Weather Data When the Incident Site Has No Station

By John Bryant | AMS/NWA/EPA Triple-Certified Forensic Meteorologist | Published: 2026-03-24

| Location | CONUS-wide; illustrative parameters drawn from operational case files |
|---|---|
| Time Window | Event-dependent; typical reconstruction covers 24-72 h Local / UTC offset per site |
| Max Wind (example) | Interpolated 58 mph (~50 kt) ± 7 mph; nearest ASOS 22 mi; corroborated NEXRAD 0.5° scan |
| Max Rain (24 h, example) | 3.8 in ± 0.4 in; NEXRAD QPE Stage IV + COOP observer 18 mi SE |
| Data Sources | NOAA/NCEI ASOS archive; NEXRAD Level-II (WSR-88D); HRRR operational archive; NOAA Stage IV QPE; CoCoRaHS; commercial mesonets (DTN/Weather Underground PWS) |
| Confidence | Medium-High (two or more independent sources, minor representativeness concern for complex terrain) |

The incident happened on a rural county road, at a warehouse receiving dock, or at a mountain trailhead: somewhere no ASOS tower, AWOS unit, or cooperative observer happens to sit. The opposing attorney will argue the data do not exist. That argument is wrong, and an experienced weather litigation expert can dismantle it with a documented, multi-source reconstruction.

This article explains the specific tools forensic meteorologists use, how those tools are combined, where each introduces uncertainty, and what attorneys and claims professionals should ask before retaining a forensic weather consultant for a remote-site case.

Why the Official Station Network Leaves Gaps

The National Weather Service, the FAA, and the Department of Defense jointly operate more than 900 ASOS stations across the United States. That sounds dense. It is not. Average spacing in the Western United States exceeds 60 miles. Even in the Midwest, station spacing of 25-40 miles is common outside major airports. ASOS sites cluster at commercial airports and military airfields, not at the rural roads, industrial parks, and open farmland where accidents and property damage actually occur.

NOAA/NCEI also archives more than 10,000 Cooperative Observer Program (COOP) stations, but many report only once per day (typically at 0700 Local), cover only temperature and precipitation, and have quality-control issues that a forensic meteorology expert must flag in any report. Understanding how to find accurate weather data for insurance and legal disputes is the essential first step before any remote-site gap analysis begins.

The result: a large fraction of U.S. litigation events occur in areas where the nearest official surface observation is 10 to 50 or more miles from the actual incident location. Distance alone does not invalidate reconstruction. What matters is whether the meteorological signal (the storm, the wind event, the icy pavement) is coherent enough across that distance to support an estimate with defensible uncertainty bounds.

The Multi-Layer Approach: How a Forensic Meteorology Expert Fills the Gap

No single data source is sufficient when a station is far from the incident site. The defensible methodology layers at least two to three independent sources, then cross-checks each one for internal consistency. Below is how each layer works and what it contributes.

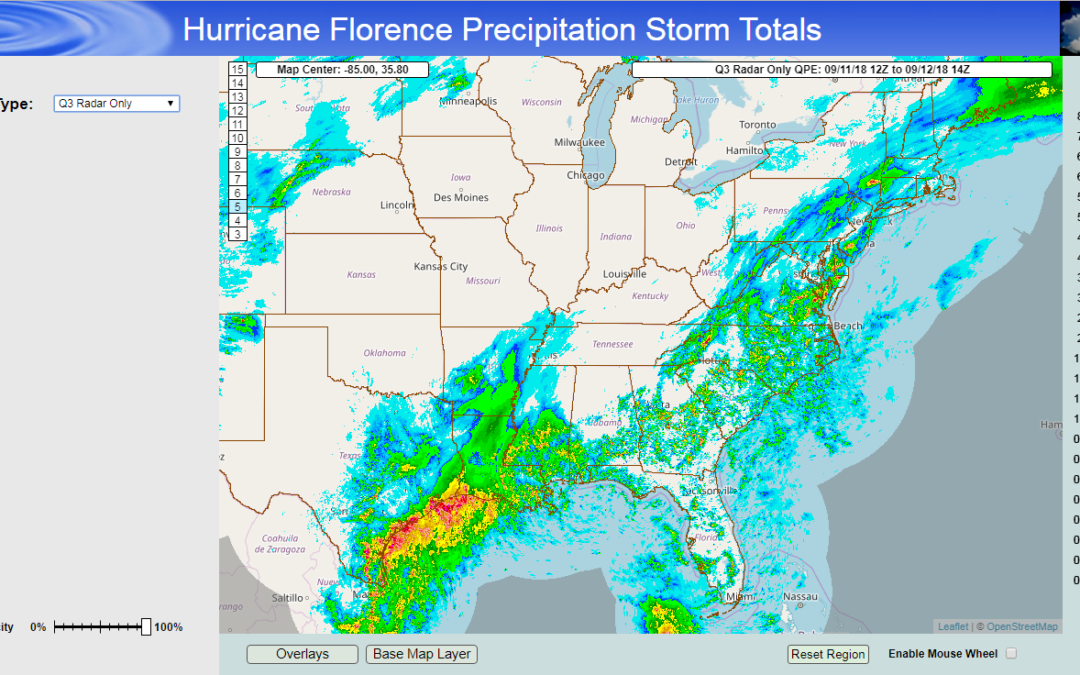

Layer 1 — NEXRAD Level-II Radar (WSR-88D Network)

The U.S. operates approximately 159 WSR-88D radars. Together they provide base reflectivity and radial velocity coverage at 0.5° elevation every 4-6 minutes in precipitation mode across most of CONUS. The base-scan beam is typically 1-3 km wide at incident distances, giving spatial resolution far exceeding the station network.

- What it shows: Precipitation type, intensity, storm track, approximate echo tops (storm depth), and surface wind signatures (velocity azimuth display, damage indicators).

- Key caveat: Radar measures the atmosphere aloft. Beam overshoot and ground clutter must be corrected. Rain-rate conversion (Z-R relationship) adds ±20-40% uncertainty on rainfall totals alone.

- Archive access: NOAA NCEI NEXRAD Level-II archive (ncei.noaa.gov/products/radar) retains data back to the early-to-mid 1990s for most sites.

- Quality control: A certified meteorologist witness applies dealiasing, bias correction, and beam-height adjustment before any value is cited in a report.
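To make the Z-R caveat concrete, the sketch below inverts the power law Z = aR^b for rain rate under two coefficient sets commonly associated with WSR-88D processing: a = 300, b = 1.4 (the long-standing convective default) and a = 250, b = 1.2 (a tropical relationship). The function name and the 40 dBZ input are illustrative assumptions, not values from a case file. The point is only that the same echo can yield quite different rain rates depending on the relationship chosen.

```python
def rain_rate_mm_per_hr(dbz: float, a: float = 300.0, b: float = 1.4) -> float:
    """Invert Z = a * R**b for R in mm/h, given reflectivity in dBZ."""
    z_linear = 10.0 ** (dbz / 10.0)      # dBZ -> linear reflectivity factor (mm^6 m^-3)
    return (z_linear / a) ** (1.0 / b)


# The same hypothetical 40 dBZ echo under two coefficient sets
convective = rain_rate_mm_per_hr(40.0)              # a=300, b=1.4
tropical = rain_rate_mm_per_hr(40.0, 250.0, 1.2)    # a=250, b=1.2

print(f"convective Z-R: {convective:.1f} mm/h")
print(f"tropical Z-R:   {tropical:.1f} mm/h")
```

Whichever relationship is used, the choice and any gauge-based bias adjustment would be documented alongside the resulting total.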

Layer 2 — NOAA/NCEI Surface Archives and Spatial Interpolation

Even when the nearest ASOS is 20 miles away, its archived METAR or hourly observation provides a real measurement at a known point. A forensic weather consultant applies spatial interpolation, typically inverse-distance weighting (IDW) or kriging, to estimate the value at the incident site from two or more surrounding stations.

- Inverse-distance weighting: Assigns more weight to closer stations. Documented, reproducible, widely accepted in court.

- Kriging: A geostatistical method that also accounts for spatial autocorrelation. More defensible when stations are not uniformly spaced.

- NCEI data products: Local Climatological Data (LCD), Integrated Surface Database (ISD), and Global Historical Climatology Network-Daily (GHCN-D) are the three primary archives used in weather expert witness services.

- Limitation: Interpolation assumes gradual change between stations. Localized convective events, such as a single-cell thunderstorm dropping 3 inches of rain in 20 minutes, can violate that assumption. Radar is essential to flag such events.
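As a rough illustration of inverse-distance weighting, the sketch below estimates a value at an incident site from surrounding stations given as (distance, value) pairs. The function name, the station distances, and the wind values are hypothetical; a production workflow would also carry station metadata and an uncertainty estimate, which this minimal version omits.

```python
def idw_estimate(stations: list[tuple[float, float]], power: float = 2.0) -> float:
    """Inverse-distance-weighted average.

    stations: (distance_to_site, observed_value) pairs, distances in any
    consistent unit. power is the IDW exponent (2 is a common default).
    """
    if not stations:
        raise ValueError("at least one station is required")
    weighted_sum = 0.0
    weight_total = 0.0
    for distance, value in stations:
        if distance == 0:
            return value                 # site coincides with a station
        weight = distance ** -power      # closer stations get larger weights
        weighted_sum += weight * value
        weight_total += weight
    return weighted_sum / weight_total


# Hypothetical peak gusts (mph) at three stations 22, 31, and 40 miles away
estimate = idw_estimate([(22.0, 61.0), (31.0, 52.0), (40.0, 49.0)])
print(f"IDW estimate at site: {estimate:.1f} mph")
```

Because the result is always bounded by the minimum and maximum station values, IDW cannot reproduce a local maximum that fell between stations, which is the limitation noted in the last bullet above.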

Layer 3 — High-Resolution Reanalysis and Operational Model Archives

Reanalysis products and archived operational model output ingest available observations into a numerical weather model and produce a physically consistent gridded reconstruction of past atmospheric states. For U.S. litigation, three products see the most use.

- HRRR (High-Resolution Rapid Refresh) archive: 3-km horizontal grid, hourly output, covers CONUS back to 2014. Developed by NOAA’s Global Systems Laboratory; run operationally by NCEP. The HRRR is an operational analysis/forecast model, not a reanalysis in the strict sense, but its archived analyses serve a similar role for short-range event reconstruction. Useful for surface wind, temperature, and short-range precipitation in terrain-influenced areas.

- RAP (Rapid Refresh): 13-km grid; broader domain; less spatial detail but useful for synoptic context and model verification.

- ERA5 (ECMWF): Native ~31-km model resolution (output on a 0.25° grid), global reanalysis back to 1940; widely cited in peer-reviewed literature; used for long-record climatological context in climate expert witness assignments.

- Critical disclosure: Models are physically consistent synthesizers of observations, not measurements. Every report that cites a model value must state model name, version or cycle, grid spacing, and the observational inputs assimilated. Courts expect this transparency.

Rule of thumb: If model output and two independent surface observations agree within expected tolerances, confidence is High. If the model is the only source, confidence is Low and the report must say so.

Layer 4 — Mesonets, CoCoRaHS, and Commercial Networks

Beyond federal infrastructure, three additional networks meaningfully close coverage gaps.

- State and university mesonets: Oklahoma Mesonet (120 stations, 5-min data), Texas Mesonet, and similar networks in a dozen states provide research-grade surface data in areas historically under-covered by ASOS. A forensic meteorology expert familiar with the relevant state network can recover station data not visible in standard NCEI searches.

- CoCoRaHS (Community Collaborative Rain, Hail and Snow Network): Thousands of volunteer precipitation gauges nationwide. Individual gauge quality varies; systematic bias checks are required. Particularly valuable for verifying isolated convective rainfall totals.

- Commercial personal weather station (PWS) networks: DTN, Weather Underground, and similar aggregators provide dense urban and suburban coverage. Data quality is inconsistent; each station must pass a co-location or inter-comparison quality check before use in litigation. Acceptable as corroboration; rarely sufficient as a sole source.

- SKYWARN storm spotter reports and NWS Local Storm Reports (LSRs): Text-based reports of hail size, wind damage, and observed conditions, archived in NCEI’s Storm Events Database. Ground-truth evidence, not measured data; useful for confirming storm occurrence and approximate intensity.

Methodology in Practice: A Step-by-Step Reconstruction

The following sequence represents the workflow a meteorologist expert witness follows when the incident site has no nearby station. Each step is documented in the final report to satisfy chain-of-custody and Daubert disclosure requirements.

Step 1 — Define the Space-Time Target

Before pulling any data, fix the incident coordinates (latitude/longitude to four decimal places), the UTC time window (±2 hours around the incident), and the specific meteorological variables relevant to the claim: wind speed and gust, precipitation type and rate, visibility, road-surface temperature, or lightning occurrence.

- Source coordinates from the police report, PLSS legal description, or Google Earth placemark.

- Convert all times to UTC at the outset. Local time errors are among the most common report defects cross-examined at deposition.

- List the meteorological questions in writing before opening any dataset. This prevents the post-hoc selection of favorable data, a methodological flaw opposing experts will exploit.
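The time-conversion step can be sketched with Python's standard library. The example below converts a local incident time to UTC using an IANA time zone name and builds the ±2 hour window. The incident time, the zone, and the function names are illustrative assumptions; `zoneinfo` also needs time zone data available on the system (on some platforms that means installing the `tzdata` package).

```python
from datetime import datetime, timedelta, timezone
from zoneinfo import ZoneInfo


def to_utc(local_str: str, tz_name: str) -> datetime:
    """Parse 'YYYY-MM-DD HH:MM' as local time in tz_name and return UTC."""
    local = datetime.strptime(local_str, "%Y-%m-%d %H:%M")
    local = local.replace(tzinfo=ZoneInfo(tz_name))   # attaches DST-aware zone rules
    return local.astimezone(timezone.utc)


def utc_window(incident_utc: datetime, half_width_hours: float = 2.0):
    """Return (start, end) of the analysis window around the incident."""
    delta = timedelta(hours=half_width_hours)
    return incident_utc - delta, incident_utc + delta


# Hypothetical incident: 5:35 PM local on a July afternoon in the Central zone
incident = to_utc("2024-07-14 17:35", "America/Chicago")
start, end = utc_window(incident)
print(incident.isoformat(), start.isoformat(), end.isoformat())
```

Using a named zone rather than a fixed offset lets the library apply daylight saving rules for the date in question. Times that fall in a DST transition hour are ambiguous and would need to be resolved by hand against the source document.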

Step 2 — Inventory All Stations Within the Search Radius

Pull the full NCEI station inventory for a 75-mile radius around the incident site. Do not stop at the nearest ASOS. Include ASOS, AWOS, COOP, mesonet, and CoCoRaHS gauges. A comprehensive inventory prevents the opposing side from introducing a station you overlooked.

- Flag each station: distance from site, elevation difference, terrain classification (flat open / complex / coastal), record completeness during the event window, and known instrument siting issues.

- Assign a tentative representativeness tier (Excellent / Good / Marginal / Exclude) before retrieving the data. This forces transparent methodological decisions.
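A minimal sketch of the inventory step follows. It computes great-circle distance and elevation difference for each candidate station and keeps those inside the search radius. The `Station` fields, station identifiers, and coordinates are hypothetical placeholders; terrain class, record completeness, and siting notes would be added as further columns in a real inventory.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_MI = 3958.8  # mean Earth radius in statute miles


@dataclass
class Station:
    station_id: str
    network: str      # e.g. "ASOS", "COOP", "MESONET", "CoCoRaHS"
    lat: float
    lon: float
    elev_ft: float


def haversine_miles(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two lat/lon points, in statute miles."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2
    return 2 * EARTH_RADIUS_MI * math.asin(math.sqrt(a))


def inventory(site_lat, site_lon, site_elev_ft, stations, radius_mi=75.0):
    """List every station within radius_mi of the site, nearest first."""
    rows = []
    for s in stations:
        dist = haversine_miles(site_lat, site_lon, s.lat, s.lon)
        if dist <= radius_mi:
            rows.append({
                "id": s.station_id,
                "network": s.network,
                "distance_mi": round(dist, 1),
                "elev_diff_ft": round(s.elev_ft - site_elev_ft),
            })
    return sorted(rows, key=lambda r: r["distance_mi"])


# Hypothetical site and candidate stations
candidates = [
    Station("STN_A", "ASOS", 35.0, -96.8, 1100.0),
    Station("STN_B", "MESONET", 35.5, -97.0, 1500.0),
    Station("STN_C", "COOP", 37.0, -97.0, 900.0),   # about 138 mi away, excluded
]
for row in inventory(35.0, -97.0, 1200.0, candidates):
    print(row)
```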

Step 3 — Retrieve and Quality-Control All Datasets

Pull ASOS METARs and hourly observations from NCEI ISD. Download NEXRAD Level-II data from the NCEI archive for the two nearest WSR-88D sites. Retrieve HRRR archive output at the grid cell containing the incident coordinates. Record each retrieval time in UTC.

- Apply NCEI automated QC flags; exclude or flag any observation marked suspect or erroneous (quality codes 2, 3, 6, or 7 in ISD encoding).

- For NEXRAD: correct for range folding, apply VCP-specific beam-height tables, check for anomalous propagation clutter during the event window.

- For HRRR: verify the model analysis time is within 1 hour of the event. Model forecasts beyond +6 hours degrade surface wind representation and should be noted.

- Document file names, version strings, and, where available, SHA-256 hashes for reproducibility.
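The hashing and logging described in the last bullet can be done with the standard library alone. The sketch below computes a SHA-256 digest for a downloaded file and builds one manifest record. The record layout and function names are assumptions for illustration, not a prescribed chain-of-custody format.

```python
import hashlib
from datetime import datetime, timezone
from pathlib import Path


def sha256_of_file(path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large radar volumes need not fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def manifest_entry(path, source: str) -> dict:
    """Build one manifest record for a raw file as it exists on disk."""
    p = Path(path)
    return {
        "file": p.name,
        "source": source,                      # e.g. archive name or URL
        "bytes": p.stat().st_size,
        "sha256": sha256_of_file(p),
        # Time the record was created; log it immediately after download
        # so it doubles as the retrieval timestamp.
        "logged_utc": datetime.now(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ"),
    }
```

Calling `manifest_entry` on each file right after download and writing the records to a JSON or CSV log gives an opposing expert a way to confirm they are working from byte-identical inputs.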

Step 4 — Cross-Check the Sources and Assign Confidence

Compare each source against the others for the same variable at the same time. Agreement within expected tolerances (e.g., ASOS wind within ±5 mph of HRRR surface output) supports a High-confidence estimate. Disagreement triggers investigation: instrument malfunction, representativeness mismatch, or genuine mesoscale variability. Document the resolution of every discrepancy.

- High: Two or more independent sources agree; instruments well-sited; no QC flags.

- Medium: Partial agreement; one source has siting or representativeness concern; minor data gap.

- Low: Sparse or conflicting evidence; model is the primary basis for the key variable.
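One way to keep tier assignment consistent across cases is to encode the rules as a small function. The sketch below is a simplified reading of the three tiers above, and several of its choices are assumptions made here for illustration: "agreement" is taken to mean the spread between sources is within the tolerance, "partial agreement" is taken to mean within twice the tolerance, and a model-only basis is always Low. Real cases involve judgment that a few lines of code cannot capture.

```python
from dataclasses import dataclass


@dataclass
class SourceEstimate:
    name: str
    value: float                # same variable, time, and units for all sources
    is_model: bool = False      # True for model output such as HRRR or RAP
    has_concern: bool = False   # QC flag, siting, or representativeness issue


def assign_confidence(estimates: list[SourceEstimate], tolerance: float) -> str:
    """Map a set of source estimates to High / Medium / Low."""
    observed = [e for e in estimates if not e.is_model]
    if len(estimates) < 2 or not observed:
        return "Low"            # single source, or model is the only basis
    values = [e.value for e in estimates]
    spread = max(values) - min(values)
    concerns = sum(e.has_concern for e in estimates)
    if spread <= tolerance and concerns == 0:
        return "High"
    if spread <= 2 * tolerance and concerns <= 1:   # assumed "partial agreement" rule
        return "Medium"
    return "Low"


# Hypothetical peak-gust estimates (mph) with a 5 mph tolerance
example = [
    SourceEstimate("ASOS (interpolated)", 55.0),
    SourceEstimate("HRRR analysis", 51.0, is_model=True),
]
print(assign_confidence(example, tolerance=5.0))
```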

Step 5 — Produce the Estimate With Explicit Uncertainty Bounds

State the best estimate and its ±range at the incident location and time. The range must reflect both instrumental uncertainty and spatial representativeness. A wind estimate of 52 mph ±9 mph is more defensible than a point estimate of 52 mph with no stated uncertainty, and more useful to counsel planning a case strategy.
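As an illustration of how two uncertainty components might be combined into a single stated range, the sketch below adds them in quadrature (root-sum-square). That choice assumes the components are independent and expressed at the same confidence level, which has to be justified case by case. The ±4 mph instrument term and ±8 mph representativeness term are hypothetical numbers chosen for the example.

```python
import math


def combined_uncertainty(*components: float) -> float:
    """Root-sum-square of independent uncertainty components (same units)."""
    return math.sqrt(sum(c * c for c in components))


def format_estimate(best: float, components: list[float], unit: str = "mph") -> str:
    """Render a best estimate with its combined half-range."""
    half_range = combined_uncertainty(*components)
    return f"{best:.0f} {unit} ±{half_range:.0f} {unit}"


# Hypothetical: ±4 mph instrument uncertainty, ±8 mph spatial representativeness
print(format_estimate(52.0, [4.0, 8.0]))
```

If the components are correlated (for example, both derive from the same station), simple quadrature understates the range and a more conservative combination is appropriate.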

Why Terrain and Region Change Everything

The same 20-mile gap between incident site and nearest ASOS carries very different uncertainty depending on geography. Attorneys and claims professionals should calibrate expectations accordingly.

Gulf Coast and Lower Mississippi Valley

- Flat coastal plain; strong mesonet presence (Louisiana, Mississippi, Alabama state networks plus Texas Mesonet).

- Synoptic-scale events (hurricane landfall, winter precipitation) are spatially coherent over 50+ miles. Confidence typically High with two stations and Stage IV QPE.

- Convective squall lines can produce localized wind maxima of 60+ mph over widths of only 2-5 miles. Radar becomes essential to localize the strongest wind corridor.

Front Range and Southern Rockies (Colorado, New Mexico, Wyoming)

- Elevation changes of 3,000-8,000 ft over 20 horizontal miles create microclimates that ASOS stations cannot capture.

- Downslope windstorms (chinook events) can produce gusts exceeding 100 mph at specific canyon exits while adjacent valleys remain calm. A meteorology accident reconstruction in this terrain requires HRRR terrain-following wind analysis and high-elevation SNOTEL or CoAgMet station data.

- Winter precipitation type (snow vs. rain vs. freezing rain) varies over tenths of a mile on the I-25 corridor. Confidence for precipitation type at a remote mountain site is often Medium absent a nearby COOP or mesonet sensor.

Southern Plains (Kansas, Oklahoma, North Texas)

- Oklahoma Mesonet provides 120 stations at an average spacing of roughly 20-25 miles, making it one of the densest statewide mesonets in the country. Remote-site cases in Oklahoma with no nearby ASOS often achieve High confidence using mesonet data alone, provided the incident falls within the mesonet’s declared quality-assured distance thresholds.

- Supercell thunderstorms produce extreme hail and wind gradients over miles. A certified meteorologist witness will map the storm-relative swath using multi-radar mosaic analysis (MRMS) before assigning any spatial estimate.

Appalachian Mountains and Mid-Atlantic Interior

- Ridge-and-valley topography creates sharp orographic precipitation gradients. Windward slopes may receive two to three times the rainfall of adjacent valleys.

- NEXRAD beam blockage is a known problem behind elevated terrain. The meteorologist must verify beam geometry using terrain-aware beam propagation calculations (standard 4/3 Earth effective radius model) and the NWS RIDGE radar display for the nearest WSR-88D before citing radar-derived precipitation.

- West Virginia, eastern Kentucky, and western Pennsylvania cases frequently rely on CoCoRaHS volunteer gauges as the primary precipitation anchor. A weather expert witness services provider should check CoCoRaHS QC flags and inter-gauge consistency before citing these values.

Practical Implications for Attorneys and Claims Professionals

Insurance Claims and Subrogation

- A well-documented remote-site reconstruction can confirm whether a loss qualifies as a named peril (windstorm, hail, named storm) under the policy language, and how the reconstructed wind compares with ASCE 7-22 design thresholds.

- Subrogation cases involving manufacturer defects often require proof that design-basis wind or load was not exceeded. A medium-confidence reconstruction with explicit ±bands can still meet that burden when the uncertainty range falls entirely below the design threshold.

- FEMA/NFIP flood claims may require demonstrating that observed rainfall matched or exceeded a specific return-period event (e.g., 100-year, 500-year). Stage IV QPE paired with NOAA Atlas 14 frequency data provides the standard approach. If you are weighing whether expert analysis is warranted for your claim, see our guide on whether you need an expert meteorologist witness for an insurance claim.

Personal Injury and Wrongful Death

- Slip-and-fall cases at remote commercial properties or roadway accident reconstruction require precise conditions at the time and place of incident, not the nearest airport 30 miles away.

- Visibility and road-surface temperature (RST) estimates at remote sites frequently require HRRR surface energy balance output supplemented by any nearby pavement sensor data (many state DOTs operate road weather information systems, or RWIS).

- When meteorology court testimony involves pavement conditions, the expert should also cite NWS Winter Road Advisory products for the grid cell covering the site as corroborating evidence.

Construction Delay and Force Majeure

- Many construction contracts define force majeure weather thresholds by reference to ASCE 7-22 or project-specific design parameters. A forensic weather consultant documents whether observed conditions at the remote site exceeded those thresholds.

- Remote wind farm and solar installation sites are particularly common in areas with sparse station coverage. The meteorologist should check whether the project’s own weather monitoring equipment was operational during the claimed event. On-site data always outranks interpolated data in court. For a deeper look at how wind conditions are quantified at a property, see how to find accurate wind damage information for a property.

Three Mistakes That Undermine Remote-Site Weather Reports

Using the closest station without checking its representativeness. Distance alone is a poor proxy for suitability. A COOP station 5 miles away on the wrong side of a ridge is less representative than a mesonet station 18 miles away on similar terrain. Every report should document why the selected station(s) were appropriate, not just that they were closest.

Citing model output without acknowledging its smoothing effect. The HRRR 3-km grid is excellent for mesoscale context. It will not capture a 150-yard microburst downdraft or a 10-minute convective gust of 80 mph. Any report that relies solely on model output for an extreme-event claim is vulnerable to cross-examination. Recognizing this kind of avoidable methodological gap is also the starting point for improving your ROI with a forensic meteorologist.

Failing to disclose uncertainty ranges. A confident-sounding point estimate without error bounds is a red flag to an experienced opposing expert. Courts increasingly expect ±ranges, confidence tiers, and method documentation. Reports that omit them are more easily attacked than reports that include honest uncertainty quantification.

Frequently Asked Questions

What does a forensic meteorology expert do when no ASOS station is near the incident site?

The expert draws on multiple independent data layers: NEXRAD Level-II radar (range gates of 250 m in super-resolution data, 1 km in legacy data), NOAA/NCEI surface archives, mesoscale analysis and forecast grids (HRRR at 3-km spacing, RAP at 13-km spacing), cooperative observer reports, and commercial mesonet networks. Each source is cross-checked for consistency before any value enters a report.

How far away can a weather station be and still be usable for litigation?

There is no fixed distance rule. Representativeness depends on terrain, storm scale, and the variable in question. A flat-terrain wind event may allow confident interpolation across 30-50 miles; a convective rainfall maximum in complex terrain may only be reliable within 5 miles of the measured point.

Is reanalysis or operational model output admissible as evidence?

Models corroborate observations; they do not replace them. Courts have accepted reanalysis and operational model archive products (ERA5, HRRR archive) as supporting exhibits when paired with actual station data. An experienced forensic weather consultant documents model resolution, known biases, and uncertainty ranges to meet Daubert standards.

What does it cost to hire a meteorologist expert witness for a remote-site case?

Rates typically run $200-$500 per hour depending on scope, credentials, and expected testimony. A straightforward records review may require 4-8 hours; a full report with deposition and trial testimony commonly reaches 20-40 hours. Request an itemized scope estimate before retaining. For a full breakdown of engagement costs and what to look for when vetting candidates, see our page on how to find a forensic weather expert.

Can radar data replace surface observations for weather litigation?

Radar measures reflectivity and radial velocity aloft. It is not a surface observation. It is powerful corroborating evidence for precipitation intensity, storm track, and wind field, but surface temperature, dew point, and pressure require station data or physically consistent reanalysis to complete the picture.

How does regional terrain affect confidence in remote-site weather reconstruction?

Terrain is the single biggest confidence modifier. Gulf Coast cases often benefit from dense mesonet coverage and flat topography, enabling High-confidence interpolation. Front Range (Colorado) and Appalachian cases routinely involve elevation-driven microclimates that reduce confidence to Medium and widen uncertainty bands.

Are commercial weather app records admissible in litigation?

Commercial weather apps typically display modeled forecasts or crowd-sourced observations that lack documented chain of custody, certified quality control, and known instrument calibration. A forensic meteorologist relies on NOAA-certified archived data (ASOS METARs, NCEI ISD records, NEXRAD Level-II) because these datasets carry federal provenance, documented QC procedures, and reproducible retrieval paths. A weather app screenshot does not meet Daubert or Frye requirements.

Does spatial interpolation meet Daubert and Frye admissibility standards?

Spatial interpolation methods such as inverse-distance weighting and kriging are well-established in the peer-reviewed meteorological and geostatistical literature, have known and quantifiable error rates, and are reproducible by any qualified expert given the same input data. These characteristics satisfy the core Daubert factors (testability, peer review, known error rate, general acceptance). When a forensic meteorologist documents the method, input stations, parameters, and resulting uncertainty range, courts have accepted interpolated weather estimates as admissible expert evidence.

Key Takeaways

- No single source is sufficient when a station is 10+ miles from the incident site. A defensible reconstruction requires at least two independent layers and explicit uncertainty ranges for each meteorological variable.

- Regional terrain is a primary driver of confidence. Gulf Coast flat-terrain events can support High-confidence interpolation across 30-50 miles; Front Range or Appalachian events may only support Medium confidence within 10 miles due to orographic microclimates.

- Transparency about limitations strengthens, not weakens, expert testimony. Courts expect ±ranges, source documentation, and chain-of-custody disclosures, and opposing experts will exploit their absence.

How Weather Data Is Used in Court

In this interview, forensic meteorologist John Bryant discusses how certified weather data is used to reconstruct conditions for personal injury and insurance litigation, including why data provenance and quality control matter in cross-examination.

Does your case involve a remote incident site with no nearby weather station?

Request a no-obligation case review with John Bryant, AMS/NWA/EPA triple-certified forensic meteorologist. Contact Weather and Climate Expert Consulting LLC or call 901.283.3099.

Nationwide weather expert witness services | Reports, deposition, and trial testimony

Authoritative Data Sources Referenced in This Article

- NOAA/NCEI NEXRAD Level-II Archive: ncei.noaa.gov/products/radar

- NOAA/NCEI Integrated Surface Database (ISD): Surface hourly and METAR archives for all U.S. stations.

- NOAA Global Systems Laboratory — HRRR Archive: rapidrefresh.noaa.gov/hrrr/

- NOAA Stage IV Quantitative Precipitation Estimates: Multi-sensor hourly QPE mosaic, CONUS coverage.

- CoCoRaHS: Community cooperative rain, hail and snow gauges — cocorahs.org

- Oklahoma Mesonet: Reference-quality state mesonet data — mesonet.org

Need these datasets interpreted for active litigation? Learn about our forensic meteorology services or call 901.283.3099.

Technical Appendix — Methods, Datasets, and Uncertainty Quantification

This section is intended for opposing experts, technical reviewers, and attorneys preparing cross-examination questions. Plain-English users may stop at the Key Takeaways section above.

Spatial Interpolation: IDW vs. Kriging

Inverse-distance weighting (IDW) estimates a value at an ungauged point as a weighted average of surrounding stations, with weights proportional to $d^{-p}$, where $d$ is distance and $p$ is typically 2. IDW is simple, deterministic, and widely documented. It assumes isotropic spatial variation, an assumption violated by topographic barriers and coastlines. Ordinary kriging accounts for spatial autocorrelation via a variogram fit to the available station data and produces both an interpolated value and a kriging variance (formal uncertainty estimate). For cases with five or more surrounding stations, kriging is preferred; with fewer than three stations, IDW is reported alongside explicit representativeness caveats.
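Written out, the IDW estimate described above takes the following standard form. The symbols are introduced here for illustration: $\hat{z}(s_0)$ is the estimate at the incident site $s_0$, $z(s_i)$ is the observation at station $i$, $d_i$ is the distance from station $i$ to the site, and $n$ is the number of stations used.

```latex
\hat{z}(s_0) \;=\; \frac{\sum_{i=1}^{n} w_i \, z(s_i)}{\sum_{i=1}^{n} w_i},
\qquad w_i = d_i^{-p}, \quad p = 2 \ \text{(typical)}

% Worked example with two hypothetical stations:
% d_1 = 10 mi, z_1 = 50 mph;  d_2 = 20 mi, z_2 = 70 mph
% w_1 = 10^{-2} = 0.01, \quad w_2 = 20^{-2} = 0.0025
\hat{z}(s_0) \;=\; \frac{0.01 \times 50 + 0.0025 \times 70}{0.01 + 0.0025}
\;=\; \frac{0.675}{0.0125} \;=\; 54 \ \text{mph}
```

The station twice as far away receives one quarter of the weight, so the estimate sits much closer to the nearer station's value.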

HRRR Surface Wind Bias and Correction

HRRR 10-m wind analyses are known to underpredict peak gusts in convective environments by 10-30%, because gusts are driven by sub-grid turbulent kinetic energy that the PBL scheme parameterizes rather than resolves. Where HRRR surface wind is the primary estimate and no nearby ASOS is available, a gust factor (typically G = 1.3-1.6, multiplied by the sustained wind, per WMO-No. 8, Guide to Instruments and Methods of Observation, Chapter 5) can be applied to derive a gust estimate, with the derivation documented. This adjustment is disclosed as model-dependent and confidence is capped at Medium.
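The gust-factor arithmetic is simple enough to show directly. The sketch below applies the 1.3-1.6 range quoted above to a sustained wind and returns the implied gust band. The 36 mph input and the function name are hypothetical.

```python
def gust_range(sustained: float, g_low: float = 1.3, g_high: float = 1.6) -> tuple[float, float]:
    """Return (low, high) gust estimates from a sustained wind and a gust-factor range."""
    if sustained < 0:
        raise ValueError("sustained wind must be non-negative")
    if g_low > g_high:
        raise ValueError("g_low must not exceed g_high")
    return sustained * g_low, sustained * g_high


# Hypothetical model-derived sustained wind of 36 mph
low, high = gust_range(36.0)
print(f"gust estimate: {low:.0f}-{high:.0f} mph")
```

Reporting the full band, rather than a single gust value from one chosen factor, keeps the model-dependent nature of the adjustment visible.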

Stage IV QPE Uncertainty

NOAA’s Stage IV multi-sensor precipitation analysis combines gauge-corrected radar QPE and satellite estimates at 4-km resolution. Independent validation studies (e.g., Nelson et al., 2016, Wea. Forecasting, 31, 371-394) report systematic discontinuities at RFC boundaries and variable accuracy depending on season and location; in the mountainous West, errors can exceed 40% due to radar beam blockage and sparse gauge input. These uncertainty ranges are stated explicitly whenever Stage IV is cited as a primary precipitation source.

NEXRAD Beam-Height Correction

At a slant range of 100 km, the 0.5° WSR-88D beam center is approximately 1.5 km above ground level (accounting for 4/3 Earth effective radius). Precipitation below that height is not sampled. For shallow winter precipitation (snow, freezing drizzle) and in flat-terrain convective rain, beam height errors can cause QPE underestimates of 20-40%. The forensic report documents the slant range and beam height for each analysis time and notes whether precipitation type and cloud depth support reliable low-level sampling.
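The beam-height figure above can be reproduced with the standard 4/3 effective Earth radius model. The sketch below computes beam-center height relative to the radar antenna and an approximate beam width from a nominal 0.95° beamwidth. The function names are illustrative, and terrain elevation at the target is not included, so height above local ground requires a further adjustment.

```python
import math

EARTH_RADIUS_KM = 6371.0
EFFECTIVE_RADIUS_KM = (4.0 / 3.0) * EARTH_RADIUS_KM   # standard-refraction model


def beam_center_height_km(slant_range_km: float, elev_deg: float,
                          antenna_height_km: float = 0.0) -> float:
    """Beam-center height above the antenna's reference level."""
    theta = math.radians(elev_deg)
    r, re = slant_range_km, EFFECTIVE_RADIUS_KM
    return math.sqrt(r * r + re * re + 2.0 * r * re * math.sin(theta)) - re + antenna_height_km


def beam_width_km(slant_range_km: float, beamwidth_deg: float = 0.95) -> float:
    """Approximate cross-beam width at a given range (small-angle approximation)."""
    return slant_range_km * math.radians(beamwidth_deg)


for rng in (50.0, 100.0, 150.0):
    h = beam_center_height_km(rng, 0.5)
    w = beam_width_km(rng)
    print(f"{rng:5.0f} km: beam center {h:.2f} km, width {w:.2f} km")
```

Because height grows faster than linearly with range, doubling the distance from the radar more than doubles the depth of atmosphere left unsampled beneath the lowest beam.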

Chain-of-Custody Footnote

Data retrieved (UTC): Representative pull dates are logged per case file; the framework datasets referenced in this article were current as of 2026-03-24T18:00Z.

Primary datasets: NOAA/NCEI NEXRAD Level-II archive; NOAA/NCEI Integrated Surface Database (ISD) v2; NOAA NCEP HRRR archive (cycles: per-event, documented in case file); NOAA Stage IV QPE v3.0; CoCoRaHS v2 QC-flagged export; Oklahoma Mesonet standard QC product.

Tools / versions: Python 3.13 / MetPy 1.7 / pyproj 3.6; NOAA Weather and Climate Toolkit (WCT) 4.9; ArcGIS Pro 3.6 for spatial interpolation QC; RadarScope for Level-II visualization; custom IDW/kriging scripts (available for opposing expert review upon request).

File hashes: SHA-256 hashes of raw downloaded files are maintained in the case repository and disclosed upon request per Rule 26 obligations.

Uncertainty note: All numeric estimates in production reports carry explicit ±ranges at 90% confidence unless otherwise stated. Confidence tiers (High/Medium/Low) follow the definitions in Step 4 of the reconstruction workflow above. This article presents representative values from operational case work; site-specific estimates will differ.


Forensic Meteorology Resources

Weather Data & Research:

- National Oceanic and Atmospheric Administration (NOAA)

- National Weather Service

- National Centers for Environmental Information (NCEI)


The author of this article is not an attorney. This content is meant as a resource for understanding forensic meteorology and the scientific methods used in weather reconstruction. For legal matters, contact a qualified attorney.

About the Author

John Bryant is a distinguished forensic meteorologist with 30+ years of specialized experience in weather analysis and reconstruction, as well as expert witness testimony. He holds the rare global distinction of triple certification by the American Meteorological Society (AMS), the National Weather Association (NWA), and the Environmental Protection Agency (EPA). He is recognized as one of the few meteorologists worldwide to hold all three certifications concurrently, a credential that underscores his unmatched expertise in forensic weather reconstruction and regulatory compliance.

Mr. Bryant provides authoritative expert testimony and forensic weather reconstruction for high-stakes litigation on behalf of both defense and plaintiff. He has created meteorological reports used to support legal arguments at deposition and trial, and he has served as a pivotal expert in wrongful death and personal injury cases on both sides, where his foundational meteorological analysis shaped legal strategies and case outcomes. His expert report in a two-million-dollar case involving extreme weather conditions resulted in a favorable settlement for the client.

He consults closely with legal teams to translate complex atmospheric data into clear, accessible narratives that help judges and juries understand how weather conditions affected specific facts in a case. His ability to communicate technical weather science in plain language is central to the value he brings to litigation support.

Mr. Bryant holds a B.S. in Geosciences with an emphasis in Meteorology and Atmospheric Science from Mississippi State University. He previously served as Chief Meteorologist at an ABC affiliate station in Memphis for over a decade, where he directed a professional meteorological team and worked with regional emergency management services during severe weather events, including hurricanes, tornadoes, and winter storms. He has also collaborated with a NOAA team to audit and refine AI-driven weather models, conducting rigorous assessments of predictive technologies for weather-sensitive sectors.

Certifications

- AMS Seal of Approval — American Meteorological Society

- NWA Seal of Approval — National Weather Association

- EPA Certified — Environmental Protection Agency